AI Already Decided Who Dies Today — And No One's Talking About the Real Implications


The Data That Should Be on Every Front Page

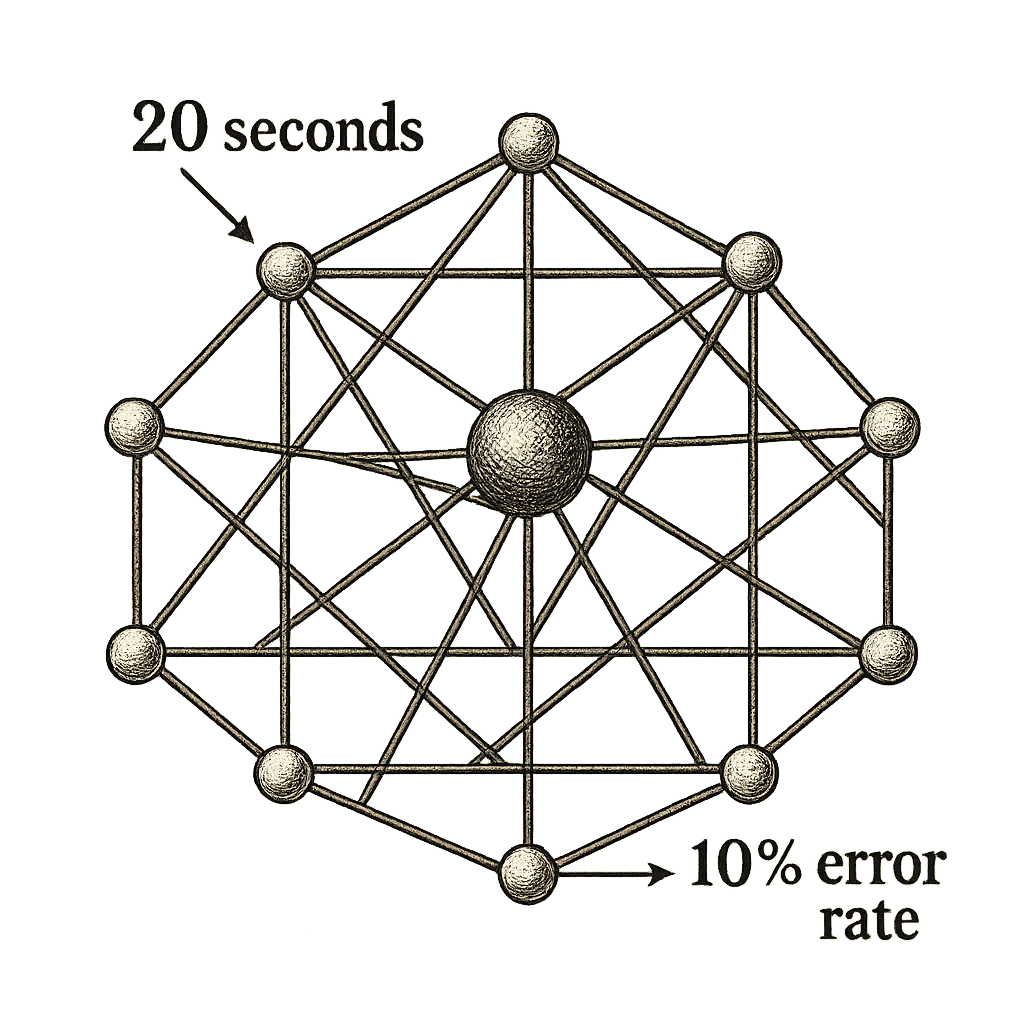

Somewhere in Gaza, over the past 18 months, an artificial intelligence system called Lavender processed 37,000 names of potential targets. Human analysts reviewed each recommendation for approximately 20 seconds before approving a bombing. The system’s reported error rate is roughly 10%. Do the math.

In Ukraine, between 70% and 80% of combat casualties now come from drones — many equipped with autonomous navigation and AI-assisted target recognition. Of the nearly 2 million drones Ukraine acquired in 2024 alone, 10,000 have AI capabilities. The cost of adding autonomous targeting to a commercial drone: $25.

These aren’t projections. They’re not think tank scenarios about the future of war. They’re happening now, documented in intelligence reports, admitted by military officials, verified by human rights organizations.

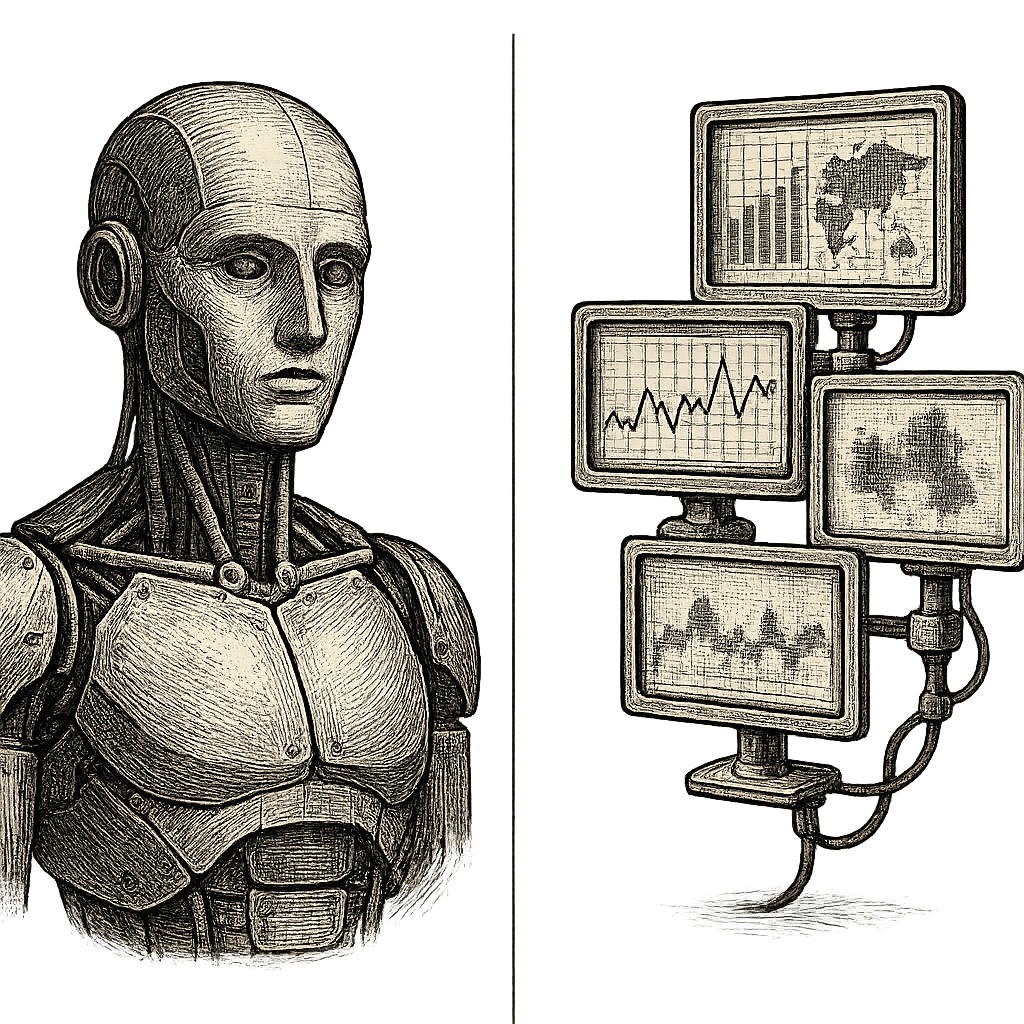

Artificial intelligence hasn’t arrived on the battlefield. It’s already here. It’s already making decisions. And while public debate obsesses over science fiction killer robots, operational reality is simultaneously more mundane and more disturbing: these are pattern recognition systems, target classification models, movement prediction algorithms — the same technology that recommends movies on Netflix or identifies faces on Facebook, reconfigured to identify who’s worth killing.

But there’s something almost no one is discussing: AI isn’t just changing how existing wars are fought. It’s changing what war is. And that transformation has implications that extend far beyond the battlefield.

Technical Anatomy of the Algorithmic Battlefield

Forget the Terminator image. Military AI in 2026 doesn’t look like a humanoid robot making autonomous extermination decisions. It looks more like a hyperproductive analyst with perfect vision and infinite memory — and all the ethical limitations of a model trained on biased data.

Let’s demystify what AI actually does in modern warfare:

Recognition and Targeting: Computer vision identifies vehicles, people, and infrastructure in real time from drone feeds, satellites, or ground cameras. The Gospel system, used by Israeli forces, automatically reviews surveillance data looking for buildings, equipment, and people linked to the enemy, and recommends bombing targets to human analysts. Lavender does the same, but for individuals: it identifies “junior operatives” by analyzing communication patterns, movement, and associations. On the imagery side, the technical approach belongs to the same family as YOLO (You Only Look Once) and other modern object detection models, except that instead of labeling “dog” or “car,” it labels “combatant” or “military installation.”

Predictive Movement Analysis: Systems like Palantir’s Metro, deployed in Ukraine, process massive intelligence from multiple sources to anticipate enemy movements. They correlate intercepted communications data, satellite imagery, field reports, historical patterns. The output: recommendations of where the enemy will be in the next 24-72 hours. This enables prepositioning forces, planning ambushes, or anticipating offensives. The underlying technology: time series analysis, prediction models, knowledge graphs — exactly what’s used in supply chain optimization or retail demand prediction.

Adaptive Electronic Warfare: Jamming (signal interference) that adapts in real-time to enemy countermeasures. If the adversary changes frequency, the system detects the change and automatically adjusts. If they try to encrypt drone communications, the AI looks for vulnerabilities in the encryption protocol. This is a millisecond arms race — no human operator can react at that speed.

Semi-Autonomous vs. Autonomous Drones — The Critical Spectrum: Here’s the distinction that defines the entire ethical debate. A drone can have:

- Level 1 - Autonomous Navigation: Flies alone, avoids obstacles, returns to base if signal is lost. Human controls targeting.

- Level 2 - Assisted Identification: The system suggests targets, human confirms.

- Level 3 - Delegated Authorization: Human approves target categories (“all T-72 tanks in this zone”), machine executes.

- Level 4 - Complete Autonomy: Machine decides what’s a valid target and when to attack. Not yet operationally verified, but technically feasible.

Most current systems operate at Levels 2-3. But the line between “assisted” and “autonomous” is blurred when the human only has 20 seconds to review an AI recommendation that processed 10,000 variables they could never manually evaluate.
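The four levels above can be written down as a small data structure, which makes the key boundary explicit: the point at which a person stops approving each individual target. This is only an illustrative sketch. The names `AutonomyLevel`, `human_approves_each_target`, and `describe` are hypothetical and do not come from any real system or standard.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """Hypothetical encoding of the four levels described in this article."""
    AUTONOMOUS_NAVIGATION = 1     # flies alone; human controls targeting
    ASSISTED_IDENTIFICATION = 2   # system suggests targets; human confirms each one
    DELEGATED_AUTHORIZATION = 3   # human approves a target category; machine executes
    COMPLETE_AUTONOMY = 4         # machine decides what is a valid target and when


def human_approves_each_target(level: AutonomyLevel) -> bool:
    """True only when a person signs off on every individual target."""
    return level <= AutonomyLevel.ASSISTED_IDENTIFICATION


def describe(level: AutonomyLevel) -> str:
    who = "human" if human_approves_each_target(level) else "machine"
    return f"Level {level.value} ({level.name}): per-target decision by {who}"


if __name__ == "__main__":
    for level in AutonomyLevel:
        print(describe(level))
```

Note what the sketch cannot capture: a Level 2 system with a 20-second review window passes the `human_approves_each_target` check on paper while behaving, in practice, much closer to Level 3.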

Offensive/Defensive Cybersecurity: Automated penetration of enemy networks. Real-time threat detection in critical infrastructure. Some cyber defense systems must operate at machine speed — if malware propagates in microseconds, response can’t wait for human approval.

Propaganda and Disinformation: Generation of synthetic content (deepfakes, cloned audio) for psychological operations. Sentiment analysis on social networks to identify opinion leaders, measure civilian morale, detect resistance movements. This is NLP (natural language processing) and generative AI — ChatGPT applied to information warfare.

The Uncomfortable Truth About Precision: No AI system has 100% accuracy. Lavender’s reported error rate in target identification is approximately 10%. That means that of 37,000 names processed, potentially 3,700 were false positives. In medicine, a model with 90% precision might be unacceptable for critical diagnoses. In military targeting, 90% precision means one in ten bombings could be killing someone who shouldn’t die. And when that error multiplies across thousands of operations, civilian casualties become structural, not exceptional.
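The arithmetic behind those figures is short enough to write out. The sketch below uses only the three numbers cited in this article (37,000 names, roughly 10% error, roughly 20 seconds of review each). The function names are illustrative, and treating the error rate as a flat share of all processed names is a simplifying assumption.

```python
NAMES_PROCESSED = 37_000   # figure cited in the article
ERROR_RATE = 0.10          # approximate error rate cited in the article
REVIEW_SECONDS = 20        # approximate human review time per name


def expected_errors(n: int, error_rate: float) -> float:
    """Expected number of misidentified names if errors are a flat share of n."""
    return n * error_rate


def total_review_hours(n: int, seconds_each: float) -> float:
    """Total human review time, in hours, at a fixed number of seconds per name."""
    return n * seconds_each / 3600


if __name__ == "__main__":
    errors = expected_errors(NAMES_PROCESSED, ERROR_RATE)
    hours = total_review_hours(NAMES_PROCESSED, REVIEW_SECONDS)
    print(f"Expected misidentifications: {errors:,.0f}")
    print(f"Total human review time: {hours:.1f} hours")
```

At 20 seconds per name, the entire list of 37,000 people receives a little over 205 hours of human attention in total, which is roughly five working weeks for a single analyst.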

This is the technical reality that rarely appears in headlines: military AI doesn’t fail because it’s “evil” or “conscious.” It fails because it’s a statistical model trained on imperfect data, making decisions in environments of extreme uncertainty, operated by humans under pressure who trust its recommendations too much.

Who Builds the Weapons of the Future — And Who Pretends Not To

If military AI were the exclusive domain of obscure defense contractors and classified government agencies, this analysis would be less relevant. The problem is it’s not.

The companies developing military targeting systems share cloud computing providers, AI frameworks, model architectures, and sometimes even code bases with companies making fitness apps or product recommendation systems. The line between “civilian” and “military” technology blurred years ago.

The Explicit Players:

Palantir Technologies deployed its Metro platform in Ukraine in 2023, integrating Western intelligence data for predictive analysis and targeting recommendations. Metro correlates satellite imagery, intercepted communications, field reports, and historical patterns to generate real-time threat maps.

Anduril Industries develops autonomous drones like the Ghost and perimeter defense systems with automated identification and response capabilities. Founded by Palmer Luckey (creator of Oculus VR), Anduril openly positions itself as “the new defense contractor model” — Silicon Valley technology applied to national security.

Shield AI manufactures artificial pilots for military drones that can operate in environments without GPS or external communication — completely autonomous navigation and decision-making in denied environments. Their Hivemind system allows multiple drones to coordinate missions without constant human intervention.

The Israeli Ecosystem — Pioneers in Military AI:

Rafael Advanced Defense Systems and Elbit Systems are the best-known Israeli defense technology companies. Gospel, Lavender, and Where’s Daddy, the AI-assisted targeting systems used extensively in Gaza, have been attributed in +972 Magazine’s reporting to the Israeli military’s own intelligence units rather than to a confirmed contractor. Where’s Daddy is particularly revealing: it tracks targets to their homes and recommends bombing them when they’re at home, under the logic that “they’re more likely to be there and collateral damage in public spaces is minimized.” The decision to attack someone in their home, potentially with their family, becomes an algorithmic recommendation.

Corporate Controversies:

In 2018, over 4,000 Google employees signed a letter demanding the company cancel Project Maven — a contract with the Pentagon to develop computer vision to help analyze drone video. Dozens resigned. Google eventually decided not to renew the contract and published its “AI Principles,” declaring it wouldn’t develop AI for weapons.

But here’s the nuance: Google Cloud still provides infrastructure to defense contractors. And its computer vision models, publicly available via API, can be used by third parties in military applications without Google having control over end use.

Microsoft won the $10 billion JEDI (Joint Enterprise Defense Infrastructure) contract with the Department of Defense in 2019. Microsoft employees also protested. CEO Satya Nadella defended the decision, arguing that the company would not withhold technology from institutions elected in democracies to protect their citizens’ freedoms. The contract was eventually canceled but replaced by JWCC (Joint Warfighting Cloud Capability), which includes Microsoft, Amazon, Google, and Oracle.

Amazon Web Services (AWS) has active contracts with the CIA and multiple defense agencies. It provides the cloud infrastructure where intelligence analysis systems run.

The Indirect Provider Dilemma:

Most AI technology is inherently “dual-use” — it can be applied to civilian or military contexts without significant modifications.

If someone develops:

- Computer vision for autonomous vehicles → can be used for attack drones

- NLP for social media sentiment analysis → can be used to identify dissidence or measure enemy morale

- Recommendation systems for e-commerce → the same architecture serves to recommend bombing targets

- Predictive analysis for logistics → can optimize military supply chains or anticipate troop movements

Government Projects and the Transparency Vacuum:

DARPA (Defense Advanced Research Projects Agency) has dozens of active programs in military AI, from human-machine collaborative decision concepts (Mosaic Warfare) to autonomous drone swarm tactics (OFFSET, OFFensive Swarm-Enabled Tactics). It publishes requests for proposals, but much of the resulting work is classified.

China and Russia develop equivalent or superior capabilities, but verifiable information is minimal. We know they exist through official statements and military exhibitions, but technical details are under total opacity.

The Emerging Ecosystem in Ukraine:

OCHI, a Ukrainian nonprofit, has collected 2 million hours of real combat video since 2022 — equivalent to 228 years of footage. This dataset is used to train models for military vehicle recognition, uniform identification, mine detection, tactical analysis. It’s the ImageNet of war.

Ukrainian startups are developing low-cost targeting systems, leveraging commercial hardware (modified DJI drones) and open-source AI models. The barrier to entry for developing an “intelligent kamikaze drone” dropped from millions of dollars to thousands.

The Technical Reality That Connects All This:

The same frameworks (PyTorch, TensorFlow), the same architectures (YOLO, transformers, diffusion models), the same cloud providers (AWS, Azure, GCP), and sometimes even the same datasets (COCO for object detection, ImageNet for classification) are used to develop both the app that tells you how many calories are in your food and the system that decides if the man in that satellite image is a combatant or civilian.

This isn’t conspiratorial criticism. It’s simply how technology works: tools are neutral, applications are not.

The Material Logic of War — And Why Ethics Can’t Ignore It

There’s a question that lawyers, philosophers, and military personnel have been trying to answer unsuccessfully for years: when an artificial intelligence makes a lethal error, who is responsible?

The engineer who trained the model? The military operator who approved the recommendation? The commander who authorized the system? The government that deployed it? The company that sold it?

The honest answer: the current international legal framework has no clear answer. And that ambiguity isn’t a technical bug. It’s a feature of the system: responsibility is shared so thinly that no one really assumes it.

But there’s a deeper dimension that complicates any purely ethical discussion: the material reality of war as an existential problem.

The Acceptable Threshold Problem:

In software development, we talk about “accuracy,” “precision,” “recall” as performance metrics. A classification model with 90% accuracy is generally considered good. In some contexts — product recommendation, spam filters — even 80% can be sufficient.

But let’s apply that same metric to Lavender: 90% precision in target identification means 10% are errors. If the system processed 37,000 names, that’s potentially 3,700 people incorrectly marked as legitimate military targets. According to +972 Magazine reports, Israeli analysts knew about the error margin but considered it “acceptable” given the volume of targets they needed to process.
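One caveat is worth making concrete: “accuracy” and “precision” are not interchangeable, and the gap between them depends on the base rate. The sketch below is a generic statistics example, not a model of any real system. Every input (population size, prevalence, sensitivity, specificity) is a hypothetical value chosen only to show the effect, and `precision_from_rates` is an illustrative name.

```python
def precision_from_rates(population: int, prevalence: float,
                         sensitivity: float, specificity: float) -> dict:
    """Derive flagged counts and precision from class-level error rates."""
    positives = population * prevalence          # people who truly belong to the class
    negatives = population - positives           # everyone else
    true_pos = positives * sensitivity           # correctly flagged
    false_pos = negatives * (1 - specificity)    # wrongly flagged
    flagged = true_pos + false_pos
    return {
        "flagged": flagged,
        "false_positives": false_pos,
        "precision": true_pos / flagged,
    }


if __name__ == "__main__":
    # All four inputs are hypothetical, chosen only to show the base-rate effect.
    result = precision_from_rates(population=1_000_000, prevalence=0.03,
                                  sensitivity=0.90, specificity=0.90)
    print(f"Flagged: {result['flagged']:,.0f}")
    print(f"False positives: {result['false_positives']:,.0f}")
    print(f"Precision: {result['precision']:.1%}")
```

With these made-up inputs, a classifier that is right 90% of the time on both classes flags about 124,000 people, of whom about 97,000 are false positives, for a precision of roughly 22%. A “10% error rate” therefore means very different things depending on whether it describes the flagged list or the whole population that was scanned.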

Here’s the unresolvable ethical dilemma: in any machine learning system, there’s a trade-off between precision and recall. You can have a very conservative model that rarely makes mistakes but misses many real targets (low recall), or an aggressive model that identifies almost all real targets but also generates many false positives (low precision).
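The trade-off is easy to see on synthetic data. The sketch below generates hypothetical classifier scores (positives drawn from a higher-scoring distribution than negatives, with made-up parameters) and sweeps the decision threshold. It uses only the Python standard library, and the function names are illustrative.

```python
import random


def make_synthetic_scores(n_pos: int, n_neg: int, seed: int = 0):
    """Hypothetical scores: positives tend to score higher than negatives."""
    rng = random.Random(seed)
    data = [(rng.gauss(0.65, 0.15), 1) for _ in range(n_pos)]
    data += [(rng.gauss(0.35, 0.15), 0) for _ in range(n_neg)]
    return data


def precision_recall(data, threshold: float):
    """Precision and recall when everything scoring >= threshold is flagged."""
    tp = sum(1 for score, label in data if score >= threshold and label == 1)
    fp = sum(1 for score, label in data if score >= threshold and label == 0)
    fn = sum(1 for score, label in data if score < threshold and label == 1)
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


if __name__ == "__main__":
    data = make_synthetic_scores(n_pos=500, n_neg=5000)
    for threshold in (0.3, 0.5, 0.7, 0.9):
        p, r = precision_recall(data, threshold)
        print(f"threshold={threshold:.1f}  precision={p:.2f}  recall={r:.2f}")
```

Raising the threshold can only lower recall, and in this synthetic setup it raises precision at the same time. Where to set that threshold is not something the code can decide: someone has to choose which kind of error they are willing to accept.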

In a military context, which is worse? Letting real combatants escape (increasing risk to your troops) or bombing innocent civilians marked incorrectly? There’s no technical answer to that question. It’s a moral decision algorithms can’t make.

The Materialist Logic of Technological Advantage:

When two actors are in conflict and one adopts a technology that grants decisive advantage, the other faces a brutal choice: adopt the same technology or accept defeat. This isn’t philosophy — it’s applied physics. In a confrontation where one side can process intelligence 100 times faster, identify targets with greater precision, and coordinate attacks at machine speed, the side that voluntarily renounces those capabilities isn’t taking an elevated moral position. It’s choosing to lose.

Military history is full of examples: the English longbow at Crécy, gunpowder, the machine gun, aviation, nuclear weapons. Every time a technology demonstrated operational superiority, all relevant actors eventually adopted it, not because they abandoned their principles but because survival demanded it.

Ukraine didn’t deploy 2 million drones because it philosophically approves of war automation. It did so because the alternative was ceding territory to an adversary with overwhelming numerical superiority. Israel didn’t develop Lavender out of fascination with AI, but because it faced the operational problem of processing intelligence on thousands of potential targets faster than human analysts could handle manually.

This doesn’t justify specific decisions about how these systems are used — bombing homes with families, accepting 10% error as “acceptable,” reducing human review to 20 seconds. But it does explain why military AI isn’t an optional luxury that countries can reject for ethical reasons: it’s a strategic necessity in any conflict where the enemy has it.

Evidence That Human Oversight Is Performative:

According to documentation from +972 Magazine verified by multiple sources:

- Israeli analysts spent “approximately 20 seconds” reviewing each Lavender recommendation before approving a bombing.

- The Where’s Daddy system tracked targets to their homes and recommended attacking them when “at home with their families,” under the argument it was the moment of greatest location certainty.

- Bombings were authorized with up to 15-20 civilian deaths accepted as “collateral damage” to eliminate a single “junior operative,” essentially a low-ranking militant.

This isn’t surgical precision. It’s industrialized targeting with a veneer of human validation.

The Algorithmic Arms Race:

Even if a country decides unilaterally not to develop lethal autonomous systems for ethical reasons, it faces the prisoner’s dilemma: if its adversaries do develop them, it’s at a potentially existential military disadvantage.
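The structure of that dilemma can be checked mechanically. The sketch below encodes a two-player game with hypothetical ordinal payoffs (the numbers are assumptions that only express a ranking of outcomes, not measurements) and searches for the action pairs from which neither side gains by deviating alone. All names are illustrative.

```python
from itertools import product

ACTIONS = ("restrain", "develop")

# Hypothetical ordinal payoffs (higher is better) as (row player, column player).
PAYOFFS = {
    ("restrain", "restrain"): (3, 3),   # mutual restraint
    ("restrain", "develop"): (0, 4),    # unilateral restraint: worst for the restrainer
    ("develop", "restrain"): (4, 0),
    ("develop", "develop"): (1, 1),     # arms race: worse for both than mutual restraint
}


def best_responses(player: int, other_action: str) -> set:
    """Actions that maximize `player`'s payoff given the other side's action."""
    def payoff(action: str) -> int:
        profile = (action, other_action) if player == 0 else (other_action, action)
        return PAYOFFS[profile][player]

    best = max(payoff(a) for a in ACTIONS)
    return {a for a in ACTIONS if payoff(a) == best}


def nash_equilibria() -> list:
    """Profiles where each side's action is a best response to the other's."""
    return [
        (a, b) for a, b in product(ACTIONS, ACTIONS)
        if a in best_responses(0, b) and b in best_responses(1, a)
    ]


if __name__ == "__main__":
    print(nash_equilibria())
```

Under these assumed payoffs the only stable outcome is that both sides develop, even though both would rank mutual restraint higher. Changing that result requires changing the payoffs themselves, for example through verification or enforcement that makes unilateral development costly.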

This is the logic that drove nuclear proliferation in the 20th century. Once a military technology is demonstrated as effective, all state actors eventually adopt it.

The difference with nuclear weapons: the entry threshold for developing military AI is orders of magnitude lower. You don’t need massive industrial infrastructure or internationally controlled materials. You need competent engineers, GPUs, and datasets — resources any medium-sized country or even sufficiently financed non-state actors can obtain.

From a materialist perspective, this isn’t pessimism — it’s recognition that war has always been a competition where technological advantage defines survival. Ethics can and should inform how technologies are used, what restrictions are imposed, what safeguards are built. But it can’t eliminate the reality that in an existential conflict, the side that unilaterally renounces decisive capabilities is choosing to lose.

The Real Danger: The War That’s Coming

Everything above — targeting systems, autonomous drones, 20-second decisions — is deeply concerning. But there’s something more fundamental almost no one is discussing: AI isn’t just changing how wars are fought. It’s changing what war is.

Since the creation of nuclear weapons and the deplorable demonstration of their power in Japan, total open war hasn’t occurred between great powers. The reason: mutually assured destruction. When everyone has the capacity to annihilate the adversary knowing they’ll be annihilated in response, large-scale conventional warfare becomes irrational.

This has given rise to proxy wars. Ukraine. Gaza. Syria. Yemen. Great powers avoid direct confrontation but sustain perpetual conflicts in others’ territories.

But AI introduces a new and dangerous variable in this equation.

The Strategic Invisibility of AI:

Unlike weapons of mass destruction that produce visible devastation, AI is discreet. It integrates across every layer of the war apparatus. It’s “invisible.” But its use dramatically raises the effectiveness of that apparatus.

This has a critical implication: AI can make war more effective without making it more visible. And that eliminates one of the most important historical brakes on conflict: the political cost of massive, visible violence.

When a nuclear bombing leaves a city in ruins and hundreds of thousands dead in an instant, the world reacts. There’s international pressure. Sanctions. Political consequences.

But when an AI system identifies and eliminates targets constantly, surgically, distributed over time — 20 seconds of review, a bombing, next target — violence becomes administrative. It normalizes. It becomes invisible.

Pre-Threat Warfare:

The great immediate risk of AI use in armed conflicts is that it will end war as we know it and replace it with a new type of war: constant, low-intensity, a war of control and censorship, a war of pre-threat.

In this new paradigm, AI integrated into intelligence systems identifies and eliminates or disables targets before they’re a threat. You don’t wait for the enemy to attack. You don’t wait for them to organize. You detect them in formative stages — communication patterns, associations, movements — and act preemptively.

This is already happening. Lavender doesn’t only identify active combatants. It identifies “junior operatives” — people the system predicts could become threats based on their associations and behaviors.

And this will work at all levels of human organization: from multinational blocs to local scope in cities and neighborhoods.

The Permanent State of Low-Intensity Conflict:

Military AI won’t culminate in an apocalyptic war of machines. It will culminate in eliminating the distinction between “peacetime” and “wartime.”

Instead of declared conflicts with beginning and end, we’ll have permanent surveillance, constant preventive targeting, and continuous selective elimination of potential threats. War will cease to be an extraordinary event and become a permanent background process of society.

Think about what this would look like:

- Urban surveillance systems identify “suspicious behavior patterns” based on models trained with data from previous conflicts.

- Social networks are constantly monitored for “early signs of radicalization” or “resistance organization.”

- People are marked as “potential threats” before committing any act — only for their associations, their readings, their private conversations.

- “Neutralization” of these threats requires no trial, no evidence of crime, not even demonstrable intent. Only the algorithmic prediction that they could, eventually, represent a risk.

This isn’t dystopian science fiction. It’s the logical extension of systems that already exist.

Erosion of the “Rules-Based Order”:

In the past two years, the idea of “rules-based international order” has completely collapsed, giving way to the order and rules of the strongest.

AI accelerates this process because:

- It reduces the political cost of violence: If you can eliminate threats surgically and constantly without images of massive devastation, there’s less international pressure to stop you.

- It distributes responsibility until invisible: When thousands of lethal decisions are made through automated systems with 20-second “human review,” who’s responsible? Everyone and no one.

- It normalizes preventive violence: If your AI system can predict future threats with 90% precision, why would you wait for them to materialize? The logic of prevention becomes irresistible, and extends to increasingly broad categories of “potential threat.”

- It creates permanent information asymmetry: Countries with better AI systems have advantages in intelligence, targeting, and decision-making that compound exponentially. This doesn’t just give them military power. It gives them power to define who is a threat and who isn’t.

The Fundamental Question No One Wants to Answer:

Military AI isn’t the real problem. The real problem is whether humanity as a whole can avoid total open war. The question is whether we’re ready to reject violence once and for all as a form of conflict resolution.

But here’s the trap: AI makes that question harder to answer, not easier.

Because AI enables a form of war that’s effective enough to sustain permanent domination, but discreet enough to avoid the political consequences that historically limited large-scale violence.

It enables a state of perpetual low-intensity conflict that never generates enough public outrage to stop it, but is lethal enough to suppress any real resistance.

And that, much more than autonomous drones or targeting systems, is what should keep us awake at night.

Regulation: Writing the Rules While War Happens

The Geneva Conventions, the pillars of modern international humanitarian law, were adopted in 1949. The term “artificial intelligence” had not yet been coined. The idea of a weapon that could identify and attack targets without continuous human intervention would have sounded like science fiction.

International weapons regulation is designed for an era when weapons were physical objects with static capabilities, not software systems that evolve with each update and whose capabilities depend on the data they’re trained with.

We’re trying to regulate 21st-century technology with 20th-century legal frameworks.

Campaign to Stop Killer Robots — The Most Serious Prohibition Attempt:

Since 2013, this coalition of over 250 NGOs in 100+ countries has pressed for an international treaty banning lethal autonomous weapons systems (LAWS). They’ve gotten 70+ countries to express support for some type of regulation or moratorium.

But the military powers that really matter — United States, Russia, China, Israel, United Kingdom, France — haven’t signed any binding commitment. And without them, any treaty is performative.

The United States’ official policy requires “appropriate levels of human judgment over the use of force,” a deliberately vague formulation that doesn’t define what counts as “appropriate” or who determines it.

Israel doesn’t publicly describe Lavender and Gospel as weapons systems at all. Officially, they’re “decision support tools,” not autonomous weapons, though the distinction becomes semantic when they generate lists of 37,000 people to kill.

UN Conversations That Go Nowhere:

Discussions began in 2014 under the CCW (Convention on Certain Conventional Weapons) umbrella, and since 2017 the Group of Governmental Experts on Emerging Technologies in the Area of Lethal Autonomous Weapons Systems has met periodically within that framework.

In over a decade of meetings, they haven’t achieved consensus on:

- A binding definition of what constitutes a “lethal autonomous weapon”

- What level of autonomy is ethically acceptable

- What “meaningful human control” means operationally

- How to verify compliance in software systems that can be remotely updated

The reason: fundamental divergence of interests. Europe favors strong restrictions. The United States and allies want flexibility to maintain technological advantage. China and Russia participate in conversations but don’t reveal their real capabilities or accept external verification.

The Legal Definition Vacuum:

There’s no international legal definition of “meaningful autonomy” in weapons systems. This isn’t an accident — it’s intentional. Ambiguity allows each country to interpret its obligations according to convenience.

In the context of the pre-threat war that AI enables, this ambiguity is even more dangerous. Because it allows surveillance and preventive targeting systems to operate in a permanent legal gray zone.

A drone that navigates autonomously but requires human approval to fire, is it autonomous? What if approval is for a “target category” instead of individual target? What if the human only has 20 seconds and no access to the algorithm’s justification?

These questions have no clear legal answers.

Reality: Technology Advances Faster Than Regulation:

By the time an international treaty is negotiated, ratified, and implemented — a process that can take decades — the technology it attempts to regulate is already obsolete.

LAWS don’t require fundamentally new research. They’re applications of existing technologies (computer vision, reinforcement learning, autonomous control systems) already widely available. The know-how is in academic papers, code on GitHub, models on HuggingFace.

Banning LAWS development at this point would be like trying to ban the internet: the technology is so distributed and dual-use cases so numerous that effective enforcement is practically impossible.

This doesn’t mean regulation is useless. It means regulation must be adaptive, focused on use and accountability rather than on prohibiting development, and probably more effective at the national than at the international level.

But the fundamental problem persists: how do you regulate a system that operates at machine speed, in classified environments, with decision-making distributed between algorithms and humans whose review is performative?

There’s no satisfying answer. Only the certainty that while we debate the rules, operational reality is already writing them.

Total War Without the Nuclear Mushroom

In the end, there are different types of war. There’s diplomatic war, economic war, information war. And then there’s total open war, when all other forms of conflict resolution have failed.

Historically, total war had no rules. But after Hiroshima and Nagasaki, a tacit limit was established: mutually assured destruction made total war between great powers irrational.

AI is eroding that limit. Not because it makes total destruction possible — nuclear weapons already did that. But because it makes total domination possible without the visible destruction that historically activated political and moral alarms.

The war of the future won’t be the sudden extinction of entire cities in mushroom clouds of fire. It will be the constant, surgical, algorithmic suppression of any potential threat before it materializes.

It will be a war that never ends because it never really begins. That has no fronts because it’s everywhere. That has no clearly defined combatants because anyone can become a target based on statistical predictions.

And it will work at all levels: between multinational blocs competing for global hegemony, between states suppressing internal dissent, between local authorities controlling urban populations.

The Only Question That Matters:

Are we ready to reject violence once and for all as a form of conflict resolution?

Because if we’re not — and historical evidence suggests we’re not — then AI will simply make violence more efficient, more constant, more invisible, and harder to stop.

There won’t be a dramatic moment of recognition. There won’t be a war that “finally went too far.” Only the gradual normalization of a permanent state of low-intensity conflict where AI identifies threats, eliminates them, and moves to the next target.

That’s not a dystopian future. It’s the operational present of 2026, expanding.

And the most terrifying thing isn’t that technology makes it possible. It’s that the material logic of strategic competition makes it inevitable — unless humanity collectively decides that some capabilities, though technically achievable, shouldn’t be pursued.

But that decision requires global coordination, mutual verification, and willingness to accept tactical disadvantage in exchange for long-term collective security.

Nuclear weapons history demonstrates it’s possible — proliferation was limited (imperfectly) through treaties and mutual deterrence.

The difference: nuclear weapons require massive infrastructure and controlled materials. AI requires code and data already widely distributed.

That makes the regulation challenge exponentially more difficult.

And while we resolve it — if we resolve it — the war we knew is being replaced by something more insidious: a war without end, without fronts, without formal declarations, but with real, constant, and increasingly invisible casualties.

That’s the real implication of military AI almost no one is discussing.

And the collective silence on this topic, from governments, from international organizations, from academia, from the general public, is perhaps the most terrifying fact of all.